Bringing order to AI in your organisation with Sebastian Luge

In this recent webinar, our Head of Partnerships and Alliances, Gregory Pepper, hosted Sebastian Luge from cidpartners to explore a question many organisations are now facing:

How do you bring order to AI in your organisation?

Sebastian Luge is an Associate Partner at cidpartners, based in Hamburg, Germany. He supports organisations, leaders and teams in complex change processes at the intersection of strategy, culture, leadership and technology. His perspective on AI is deliberately human-centred. He sees it not just as a technological shift, but as a catalyst for new ways of working, evolving roles and modern leadership.

Very early in the conversation, he makes a point that reframes the topic:

“AI transformation brings not only technological change, but most importantly human and organisational change.”

A simple question that reveals the problem

Sebastian starts with a question that sounds almost trivial:

Who is responsible for the quality of AI outputs in your organisation?

In practice, this is where things begin to break down.

Responsibility is often unclear, implicit, or simply not defined. And as AI becomes embedded in everyday work, this lack of clarity quickly turns into operational risk.

AI adoption quickly becomes an organisational challenge

To make sense of what organisations are experiencing, Sebastian introduces a four-stage model. What starts as a simple productivity tool quickly becomes a question of organisational design.

Stage 1. Individual use

Individuals use AI to support daily tasks: writing emails, analysing data, preparing content. At this stage, the impact is local, but governance questions already appear.

Leaders start to face very practical questions:

- What tools are allowed or not allowed?

- What data can be shared with AI tools?

- What are the boundaries of acceptable use?

Even at this early stage, one tension becomes clear. Governance cannot be entirely uniform. The rules that apply in HR, for example, are not the same as in marketing or product teams.

Stage 2. Team integration

AI becomes embedded in how teams work. Shared assistants are created. Outputs start influencing collective decisions and workflows.

“In many organisations, teams are already using AI assistance, but nobody knows.”

This is where visibility starts to break down and responsibilities become unclear.

New questions emerge:

- Who is allowed to create or configure AI assistants?

- Who is responsible for the quality of their outputs?

- Who maintains and updates them over time?

Sebastian highlights how roles begin to shift here. A task like producing a report may be automated, but the responsibility does not disappear. It moves towards interpretation, validation and decision-making.

If this evolution is not made explicit, organisations continue to operate with outdated assumptions about who does what.

Stage 3. Cross-team workflows

AI starts connecting teams and automating parts of workflows across the organisation. Information flows between functions. Decisions rely on outputs that no single person fully owns.

At this stage, coordination becomes more complex and the need for clear ownership becomes critical.

“At this stage, AI becomes an organisational design task.”

The questions are no longer just about tools, but about structure:

- Who owns an AI-supported workflow that spans multiple teams?

- Where does decision-making authority sit?

- How are responsibilities distributed across roles and teams?

- How do we ensure alignment across different parts of the organisation?

Without clear answers, bottlenecks appear. Decisions slow down. Accountability becomes blurred.

Stage 4. Business model transformation

AI begins to reshape how the organisation creates value. It influences products, services and even how the organisation operates in its market.

At this point, the challenge becomes more strategic:

- How does AI change our value proposition?

- What new roles or capabilities do we need to build?

- How do we organise to continuously adapt, rather than react?

The question is no longer how to use AI, but how to structure the organisation to evolve with it.

When AI connects teams, structure becomes critical

As AI starts to operate across teams, complexity increases.

Workflows become interconnected. Data flows across functions. Decisions are influenced by outputs that no single team fully controls.

This is where the nature of the problem changes.

It is no longer enough to define rules or guidelines. Organisations need clarity on responsibilities, decision-making rights and interfaces between teams.

Without this, bottlenecks emerge. Decisions slow down. Accountability becomes blurred.

The hidden risk is misalignment

A key idea Sebastian introduces is that of shared mental models.

A shared mental model is the common understanding of how work is done, who is responsible for what, and how decisions are made.

When this shared understanding is strong, coordination improves and teams can act with confidence.

When it is not, friction increases.

AI accelerates this problem because it introduces changes that are often invisible.

“If changes are not made visible, shared mental models erode.”

People continue to operate based on how things used to work, even though the underlying reality has changed.

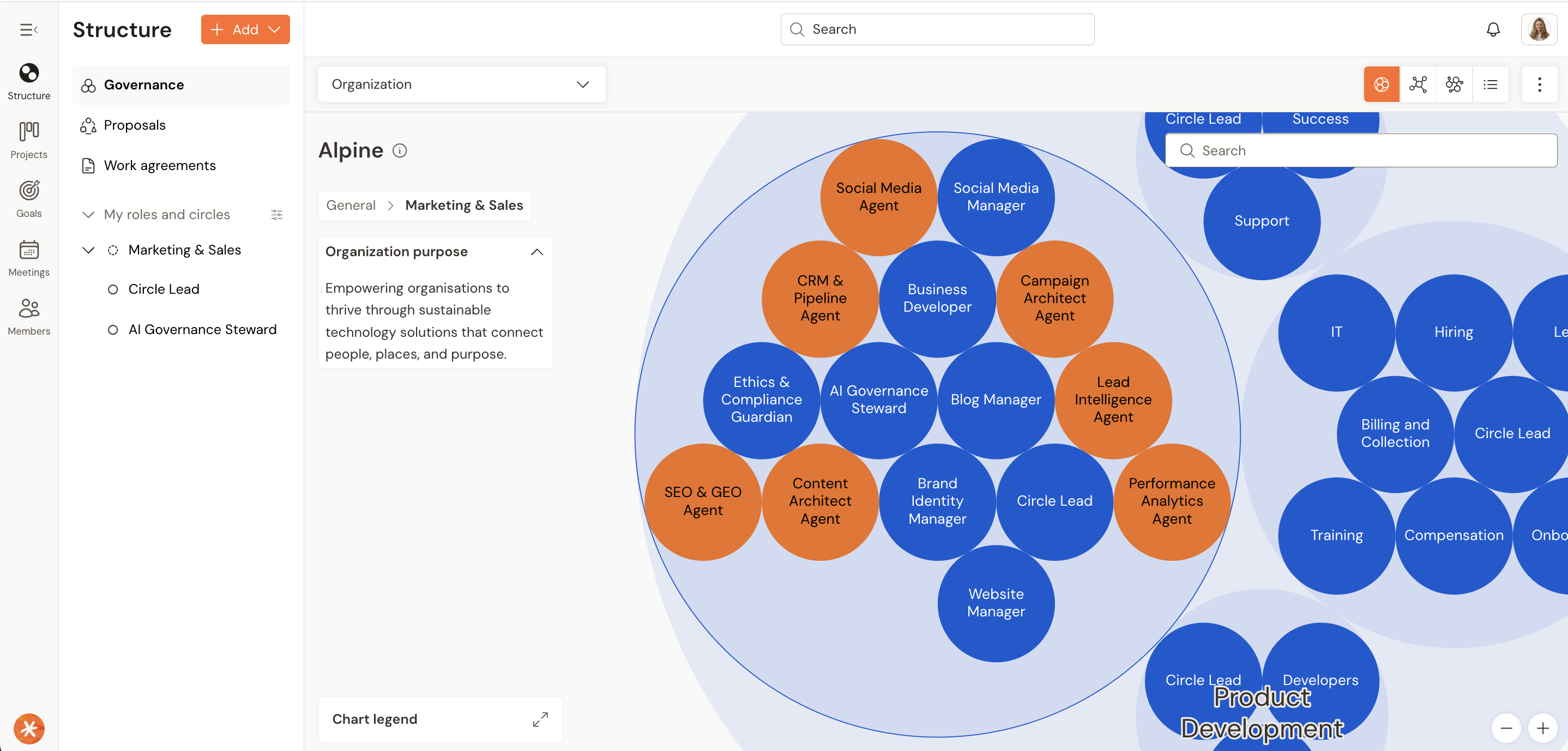

Why role-based organisations are better equipped

One of the most practical implications of AI adoption is how quickly roles begin to evolve.

Tasks are automated. Responsibilities shift. New work appears. Some work disappears.

If roles are implicit or tied too closely to job titles, this creates confusion.

Sebastian highlights a key distinction:

“A role is not a person, and a person is not their role.”

Roles describe work. They can evolve. People bring skills. They can take on different roles over time.

When organisations do not separate the two, any change to a role can feel like a personal threat. When they do separate them, change becomes easier to navigate.

This is where role-based organisations have a clear advantage.

Because roles are explicitly defined and regularly updated, they can adapt as work changes. New responsibilities, such as maintaining AI assistants or validating outputs, can be integrated without redesigning the entire organisation.

Instead of reacting with large restructures, organisations can evolve continuously.

Without this clarity, AI creates a gap between how work is actually done and how the organisation thinks it is done.

And that gap is where friction, inefficiency and misalignment grow.

The real bottleneck is not technology

Many organisations are experimenting with AI.

They run pilots. They test tools. They explore use cases.

But they struggle to move beyond that stage.

Sebastian’s observation is clear:

“The challenge is not the technology. It is bringing it into a productive environment.”

Without clarity on how work is structured, experimentation does not translate into execution.

Where to start

The first step is not a large transformation programme.

It is to make the current reality visible.

What tools are already being used? Where? By whom?

In many organisations, AI is already present. It is just informal and invisible.

From there, clarity can be built step by step. Responsibilities can be defined. Governance can be introduced. Structures can evolve.

Conclusion: clarity is what brings order

As AI progresses towards deeper integration and even business model transformation, one thing becomes clear.

Technology alone is not the differentiator.

The ability to adapt is.

And that ability depends on clarity.

“AI doesn’t transform organisations. Clarity does.”

Bringing order to AI is not about controlling the tools. It is about making work visible, defining responsibilities, and continuously adapting how the organisation operates.

For organisations willing to do that, AI becomes not a source of confusion, but a catalyst for better ways of working.
