AI Governance Isn’t a Compliance Project. It’s Your Competitive Edge. Here’s How to Build It.

Deloitte’s 2026 State of AI report is clear: only 21% of companies have mature AI governance. The other 79% are flying blind into the agentic era.

There’s a stat buried in this report that should keep every CEO and board member up at night.

74% vs. 21%

74% of companies plan to deploy AI agents within two years, yet only 21% have mature governance to manage them.

Three-quarters of enterprises are about to hand decision-making authority to machines — booking meetings, drafting communications, modifying systems, triggering workflows — without clear rules about who’s accountable, what decisions require human approval, or how to audit what went wrong.

This isn’t a technology problem. It’s an organisational design problem. And it’s one that leaders can start solving today, with concrete structural changes to how their organisations operate.

Why AI Governance Is the New Strategic Growth Lever

The instinct among many leadership teams is to treat AI governance as a brake, something IT, legal and compliance teams worry about while the rest of the organisation moves fast. Deloitte’s data says the opposite.

The report finds that enterprises where senior leadership actively shapes AI governance achieve significantly greater business value than those delegating it to technical teams alone. Governance isn’t the thing slowing you down; the absence of it is. Without clear guardrails, teams stall at the pilot stage. They can’t move AI into production because nobody has defined the rules: who reviews the output, what data is permissible, how decisions are escalated, or when a human must intervene.

The top concerns executives cite about AI aren’t about the technology itself. They’re about the organisational context around it: data privacy and security (73%), regulatory compliance (50%), governance capabilities and oversight (46%), and model quality and explainability (46%). Every one of these is a people-and-process challenge dressed up as a tech concern.

The C-Suite Takeaway

AI governance done right doesn’t slow AI adoption. It accelerates adoption by removing the ambiguity that causes teams to freeze or operate in chaos.

Five Pillars of AI Governance You Can Build Today

Governance doesn’t have to be an abstract framework that lives in a document no one reads. It can become a living operating system embedded in the tools your people use every day.

Here are five concrete pillars, each with a way to make it operational inside your organisation using Talkspirit:

1. Define Decision Boundaries Before You Deploy a Single Agent

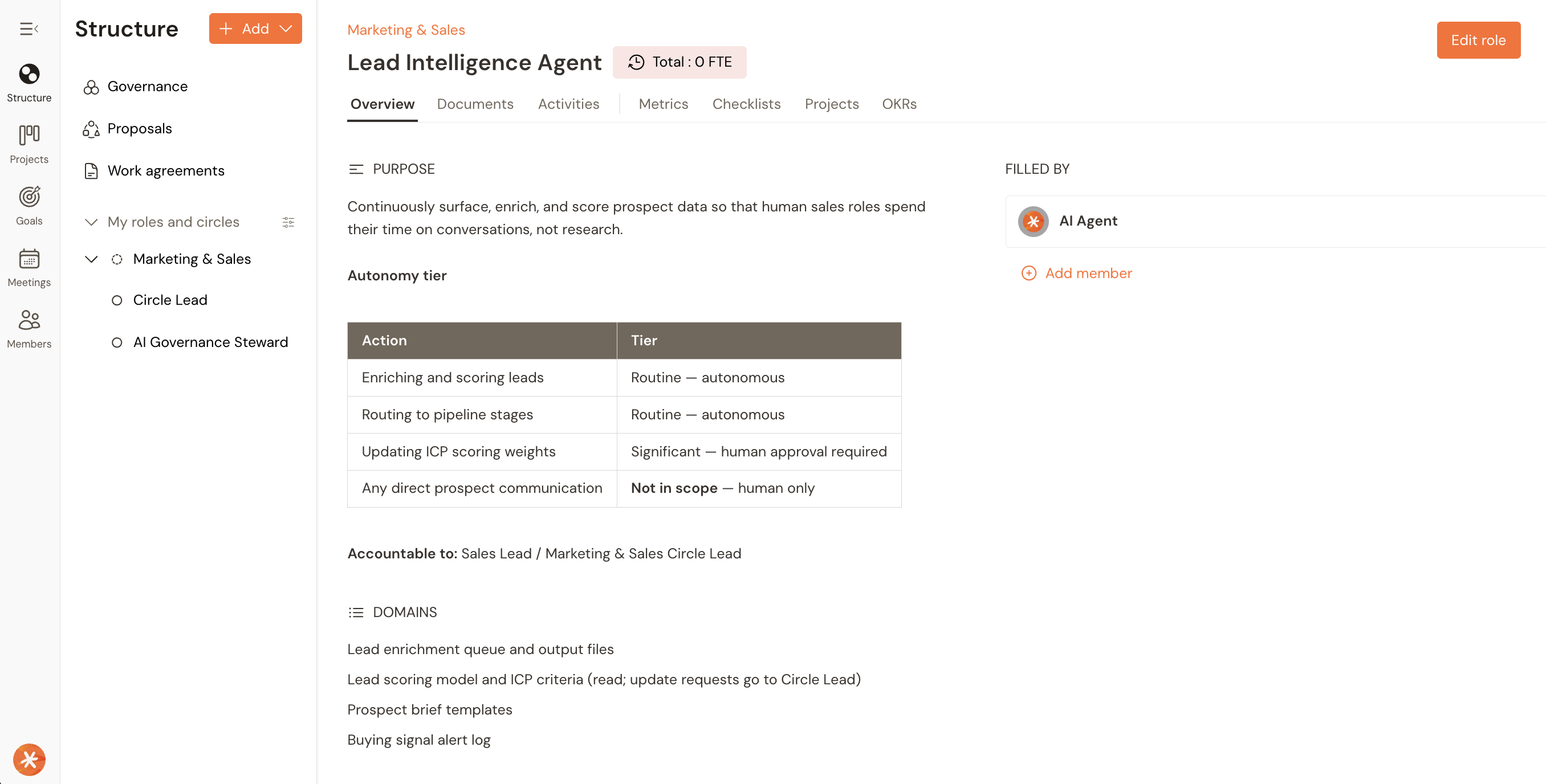

The biggest governance gap Deloitte identifies isn’t about technology; it’s about organisational clarity. Organisations are deploying AI agents without defining which decisions those agents can make autonomously, which require human approval, and which are off-limits entirely.

What to do this week:

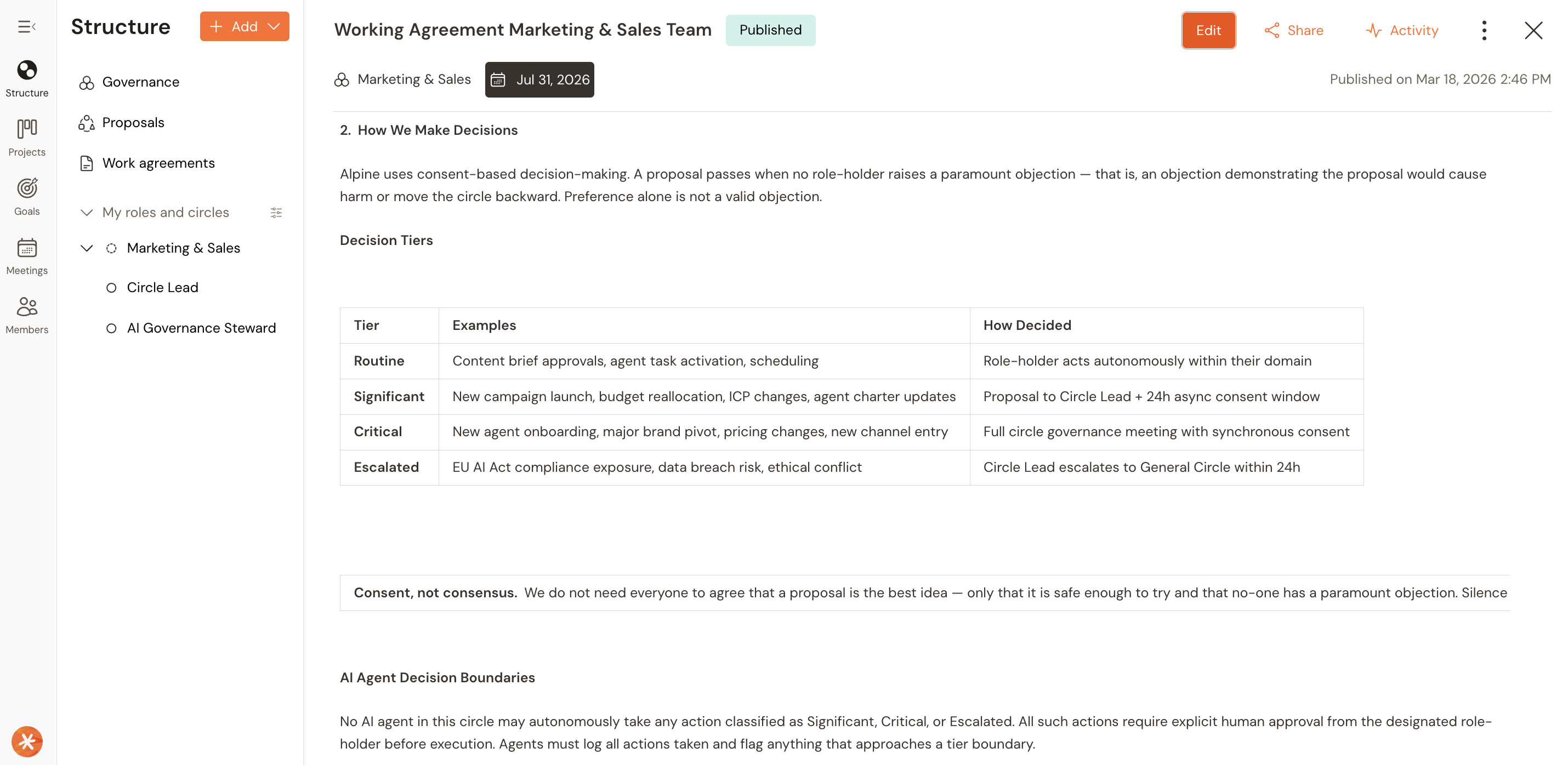

Create a three-tier decision framework for every AI use case.

Tier 1: the agent acts autonomously (low-risk, reversible actions like scheduling or summarising).

Tier 2: the agent recommends, a human approves (customer communications, budget allocations, process changes).

Tier 3: human-only (strategic decisions, legal commitments, people decisions).
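The three tiers above can be sketched as a simple routing rule. This is a minimal illustration, not a Talkspirit feature: the action names, the `ACTION_TIERS` mapping, and the `route` function are all hypothetical, and the choice to treat unlisted actions as Tier 3 is an assumption made for safety.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tier(Enum):
    AUTONOMOUS = 1      # Tier 1: the agent acts on its own
    HUMAN_APPROVED = 2  # Tier 2: the agent recommends, a human approves
    HUMAN_ONLY = 3      # Tier 3: the agent must not act


# Hypothetical action names, loosely based on the examples in the text.
ACTION_TIERS = {
    "schedule_meeting": Tier.AUTONOMOUS,
    "summarise_document": Tier.AUTONOMOUS,
    "send_customer_email": Tier.HUMAN_APPROVED,
    "allocate_budget": Tier.HUMAN_APPROVED,
    "change_process": Tier.HUMAN_APPROVED,
    "sign_contract": Tier.HUMAN_ONLY,
    "hiring_decision": Tier.HUMAN_ONLY,
}


@dataclass
class Decision:
    action: str
    tier: Tier
    outcome: str  # "execute", "await_approval", or "blocked"


def route(action: str, approved_by: Optional[str] = None) -> Decision:
    """Decide what an agent may do with a proposed action."""
    # Assumption: anything not explicitly classified is treated as human-only.
    tier = ACTION_TIERS.get(action, Tier.HUMAN_ONLY)
    if tier is Tier.AUTONOMOUS:
        outcome = "execute"
    elif tier is Tier.HUMAN_APPROVED:
        # Runs only once a named human has signed off.
        outcome = "execute" if approved_by else "await_approval"
    else:
        outcome = "blocked"
    return Decision(action, tier, outcome)
```

Defaulting unknown actions to the most restrictive tier means a new use case has to be classified explicitly before an agent can act on it, which mirrors the idea of defining boundaries before deployment.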

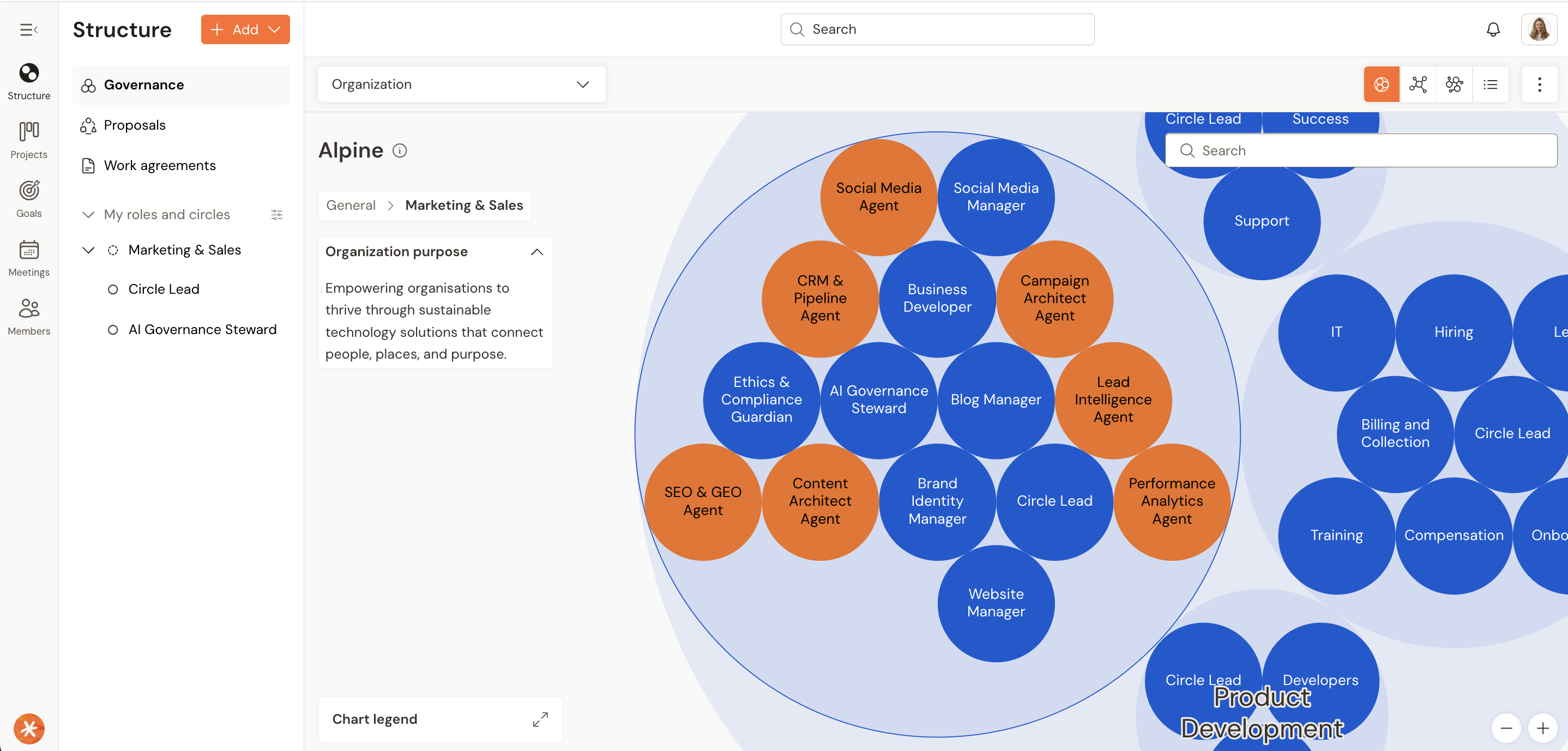

How to operationalise it in Talkspirit:

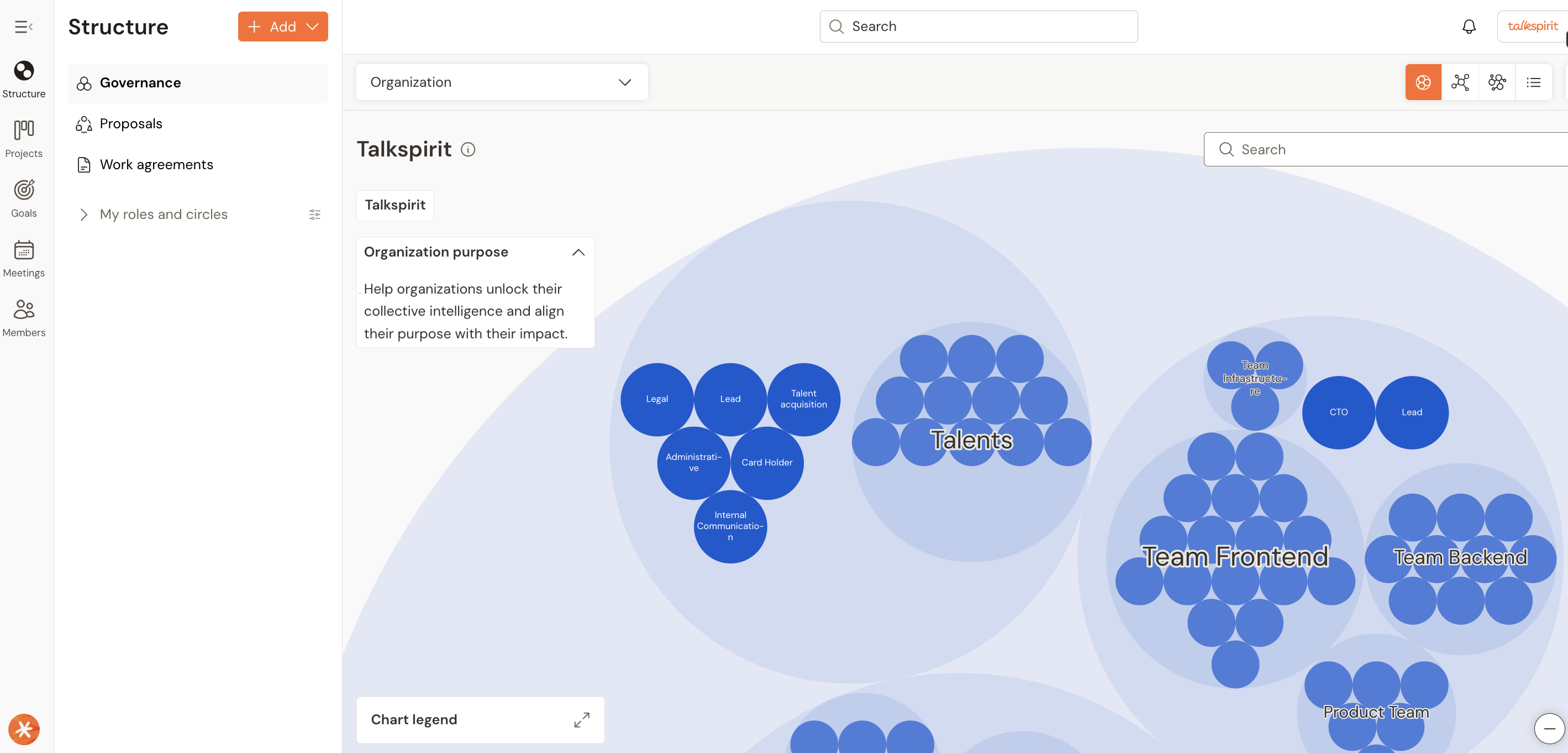

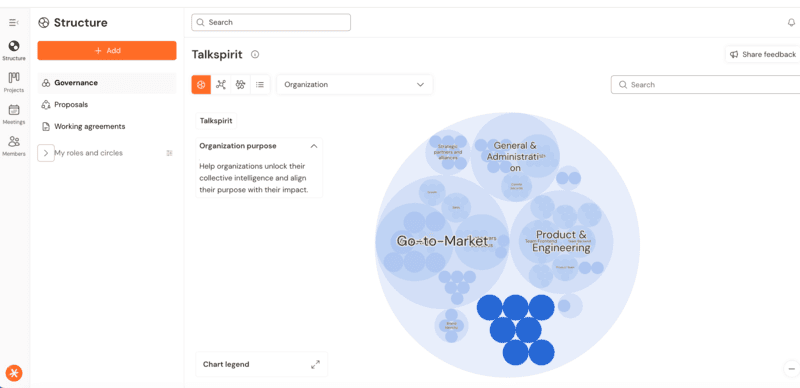

Map each tier to a specific role within your governance circle. Talkspirit’s role-based structure makes these boundaries explicit: each role has a clear purpose, domain of authority, and accountabilities. When a new AI use case is proposed, the team processes it as a “tension” in a governance meeting, the platform’s native workflow for surfacing and resolving organisational gaps. The decision framework isn’t a document someone writes and files away. It lives in the platform, attached to real roles and real processes, visible to everyone.

2. Make Accountability Visible, Not Implied

Deloitte’s report notes that some organisations discovered AI models had been deployed into production without formal oversight or monitoring, simply because no one had been explicitly assigned responsibility. This is what happens when accountability is implicit rather than structural.

What to do this week:

For every AI initiative in production or heading there, assign three explicit accountabilities: an “AI Owner” responsible for the tool’s performance and outputs, a “Data Steward” responsible for input quality and privacy compliance, and a “Human-in-the-Loop Lead” responsible for reviewing flagged decisions and edge cases.
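One way to check that no initiative is missing one of these three accountabilities is a small gap report. The sketch below assumes you keep a plain inventory of initiatives and role holders; the `accountability_gaps` function and the sample data are hypothetical and do not represent any platform's API.

```python
from typing import Dict, List

# The three accountabilities named in the text.
REQUIRED_ROLES = ("AI Owner", "Data Steward", "Human-in-the-Loop Lead")


def accountability_gaps(
    initiatives: Dict[str, Dict[str, str]]
) -> Dict[str, List[str]]:
    """Return, per initiative, the required roles that have no named holder."""
    gaps: Dict[str, List[str]] = {}
    for name, roles in initiatives.items():
        # A role counts as unassigned if it is absent or blank.
        missing = [r for r in REQUIRED_ROLES if not roles.get(r, "").strip()]
        if missing:
            gaps[name] = missing
    return gaps


# Hypothetical inventory: initiative name -> {role: person}.
inventory = {
    "support-chatbot": {
        "AI Owner": "A. Martin",
        "Data Steward": "L. Chen",
        "Human-in-the-Loop Lead": "S. Okafor",
    },
    "invoice-agent": {
        "AI Owner": "J. Dubois",
        "Data Steward": "",
    },
}

for initiative, missing in accountability_gaps(inventory).items():
    print(f"{initiative}: unassigned -> {', '.join(missing)}")
```

Running a check like this against every initiative in or near production makes implicit accountability visible as a concrete list of unassigned roles.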

How to operationalise it in Talkspirit:

Talkspirit’s Structure module lets you define these as formal roles within circles (teams, departments, or cross-functional groups). Each role has a documented purpose, domain, and set of accountabilities that everyone in the organisation can see. This isn’t a spreadsheet on someone’s desktop. It’s a live, searchable organisational structure. When a question arises about who approved an AI-driven action, the answer is one click away.

3. Build Cross-Functional AI Governance, Not a Committee

The report is clear: effective governance integrates with existing structures rather than running in parallel. Yet most organisations default to creating an “AI Ethics Committee” that meets monthly and produces reports.

What to do this week:

Create dedicated AI Governance Roles in your teams with representatives from IT, legal, operations, HR, and business units actively using AI. Give them real authority, not advisory status, and a clear purpose: to set AI policies, review high-risk use cases before deployment, and manage incidents when AI outputs cause harm or risk.

How to operationalise it in Talkspirit:

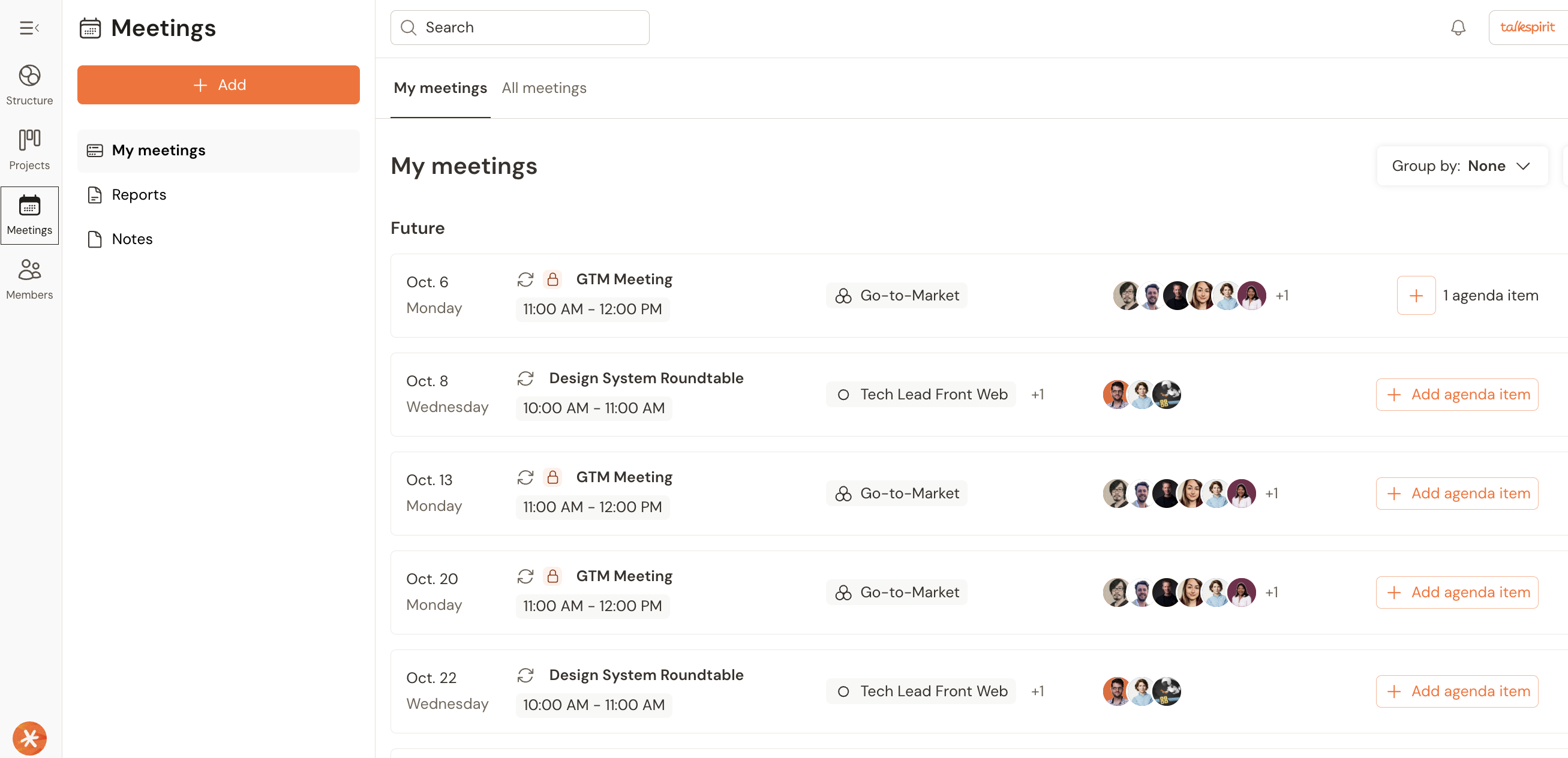

Create dedicated roles in the platform and define their purpose, domains, and accountabilities. Use the built-in meeting workflow to process incoming tensions: a new AI tool that a team wants to deploy, a data privacy concern raised by an employee, a regulatory change that affects existing deployments. Each tension gets a clear owner, a next action, and a resolution date. The decisions and policies are documented and visible to the entire organisation.

4. Establish a Sovereign Data and Tooling Policy

Deloitte reports that 77% of companies now factor an AI solution’s country of origin into vendor selection, and 66% express concern about reliance on foreign-owned AI technologies. For European organisations, this isn’t a preference, it’s fast becoming a regulatory and strategic requirement.

What to do this week:

Conduct a quick audit of your current AI stack. For each tool, answer four questions: Where is the data processed and stored? What jurisdiction governs its use? Does it comply with GDPR and emerging EU AI regulation? Could you switch providers if geopolitical conditions change? Any tool that scores poorly should be flagged for review.
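The four audit questions can be recorded per tool and turned into a list of failed checks. This is a rough sketch under stated assumptions: the `ToolAudit` fields, the `TRUSTED_REGIONS` set, and the rule that any single failed check flags the tool are illustrative choices, not a scoring method from the report.

```python
from dataclasses import dataclass
from typing import List

# Assumption: regions and jurisdictions your organisation accepts.
TRUSTED_REGIONS = {"EU"}


@dataclass
class ToolAudit:
    name: str
    data_region: str           # where data is processed and stored
    jurisdiction: str          # which jurisdiction governs its use
    compliant: bool            # GDPR and emerging EU AI regulation
    can_switch_provider: bool  # could you move if conditions change?


def failed_checks(tool: ToolAudit) -> List[str]:
    """Return the audit questions this tool fails, in question order."""
    failures = []
    if tool.data_region not in TRUSTED_REGIONS:
        failures.append("data location")
    if tool.jurisdiction not in TRUSTED_REGIONS:
        failures.append("jurisdiction")
    if not tool.compliant:
        failures.append("compliance")
    if not tool.can_switch_provider:
        failures.append("provider lock-in")
    return failures


def flag_for_review(tools: List[ToolAudit]) -> List[str]:
    """Names of tools with at least one failed check (assumed threshold)."""
    return [t.name for t in tools if failed_checks(t)]
```

A stricter or looser threshold is a policy decision; the point is that each tool gets the same four questions and a recorded answer.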

How to operationalise it in Talkspirit:

Talkspirit is designed, developed, and hosted entirely in Europe, with GDPR compliance and ISO 27001 certification built in. Using it as your organisational backbone means your governance structure, decision records, internal communications, and project management data reside within a European infrastructure. Governance isn’t just about having policies, it’s about having a verifiable, auditable record of decisions in a jurisdiction you trust.

5. Redesign Work Around AI, Don’t Just Add AI to Existing Work

84%

of companies have not redesigned jobs around AI capabilities, even as 36% expect significant automation within a year.

Meanwhile, 53% of organisations have considered flatter, pod-based structures, but only 16% have actually moved to implement them. The gap between knowing and doing is enormous.

What to do this week:

Pick one team or function where AI is already active. Map its current workflow end-to-end. Identify every task that AI handles or could handle. Then redesign the workflow from scratch, not “how do we add AI to what we do?” but “if we were building this function today, knowing what AI can do, how would we structure it?”

How to operationalise it in Talkspirit:

Using circles and sub-circles, you can model new organisational structures alongside existing ones, testing pod-based, flat, or hybrid models without disrupting current operations. Define new roles that explicitly account for human-AI collaboration: an “AI Operations Lead” who manages the team’s AI tools, a “Quality Reviewer” who audits AI-generated outputs, a “Process Designer” who continuously optimises workflows. Each role’s purpose, accountabilities, and decision authority are visible to everyone on the team.

The 30-Day AI Governance Sprint

For leaders who want to move from reading to doing, here’s a practical sequence you can execute starting today.

Week 1 — Audit and Assess

Map all AI tools and agents currently in use or planned. Classify each by decision tier (autonomous / human-approved / human-only). Identify where accountability gaps exist. Document which tools meet your sovereignty requirements and which don’t.

Week 2 — Structure and Staff

Create your AI Governance roles in Talkspirit. Define each role’s purpose, then assign its domains and accountabilities. Schedule the first triage meeting to process the backlog of tensions from Week 1.

Week 3 — Policy and Process

Draft your AI decision framework, data sovereignty policy, and incident escalation protocol. Process each through the governance circle. Publish them as living documents within Talkspirit so every employee can access them.

Week 4 — Activate and Iterate

Launch the governance model with one pilot team. Use Talkspirit’s project management functionalities (Kanban boards, task tracking, goals) to track implementation. Set a 90-day review cadence using governance meetings to refine policies based on real-world feedback.

The Organisational Clarity Advantage

Deloitte’s report ends with a powerful observation: the most successful companies won’t be those with the biggest AI budgets, but those that build AI into the foundation of how they operate, compete, and grow.

That foundation is organisational clarity and alignment: knowing who’s responsible for what, where decisions are made, how authority is distributed, and what happens when something goes wrong. In a world where autonomous agents are about to become a standard part of the enterprise workforce, that clarity isn’t optional. It’s the difference between scaling AI safely and scrambling to contain the consequences.

Talkspirit was built for exactly this moment. Not as an AI tool, but as the European organisational operating system that makes AI governable, by making structure visible, accountability explicit, and decision-making transparent.

79% of companies lack mature AI governance. They don’t have a technology problem. They have an organisational clarity problem. And that’s a problem you can start solving today.

Source quoted in this article: Deloitte, “State of AI in the Enterprise: The Untapped Edge,” January 2026. Based on a survey of 3,235 director-to-C-suite leaders across six industries and 24 countries.

The organisations that will lead in the agentic era are not the ones moving fastest, but the ones operating with the most clarity.

Start your organisational clarity journey today. Book an AI-Governance Clarity Diagnostic. In 20 minutes, we identify your key governance and AI readiness gaps, and show you how to turn your structure into a strategic advantage.

Unlock your organisation’s potential.

We are here to answer all your questions and support you in your projects.
